Zenii Architecture

Table of Contents

- System Architecture

- Data Flow

- Crate Dependency Graph

- Project Structure

- Default Paths by OS

- Feature Flag Composition

- Trait-Driven Architecture

- Credential System

- Provider Registry

- Messaging Channels System

- Identity / Soul System

- Skills System

- User Profile + Progressive Learning

- Gateway Routes

- Desktop App Architecture

- Context-Aware Agent System

- Self-Evolving Framework

- Scheduler Notification Flow

- Channel Router Pipeline

- Channel Lifecycle Hooks

- Test Debt and Hardening

- Agent Action Tools

- Autonomous Reasoning Engine

- Semantic Memory and Embeddings

- Phase 18 Hardening

- Workflow Audit Hardening

- Plugin Architecture

- Context-Driven Auto-Discovery

- AgentSelfTool

- OpenAPI Documentation

- Onboarding Flow

- Tool Permission System

- Model Capability Validation

- Agent Delegation

- Workflow Engine

- MCP Integration

- Concurrency Rules

- Lessons Learned from v1

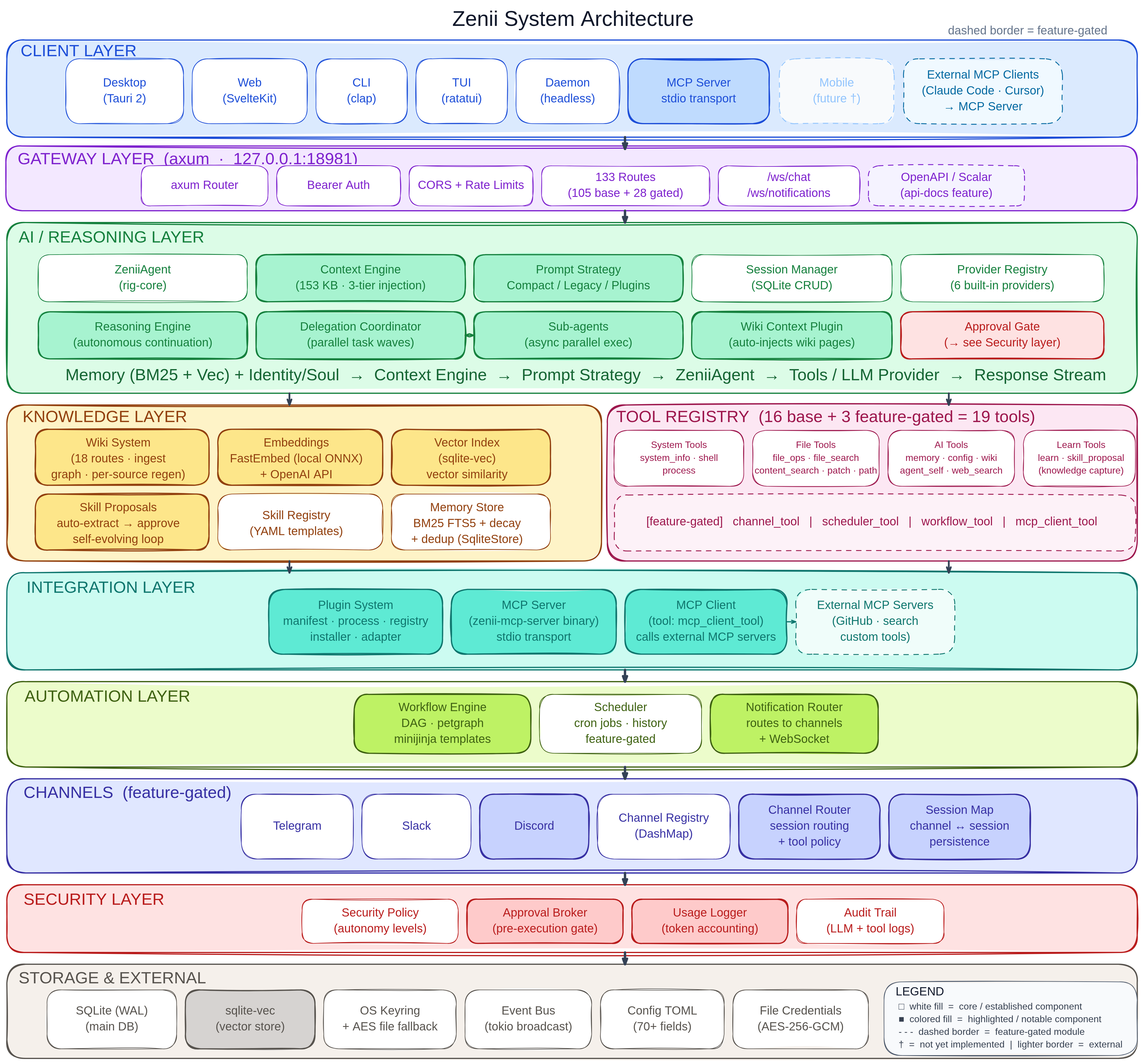

System Architecture

Data Flow

Crate Dependency Graph

Project Structure

zenii/

├── Cargo.toml # Workspace root (5 members)

├── CLAUDE.md # AI assistant instructions

├── README.md # Project documentation

├── scripts/

│ └── build.sh # Cross-platform build script

├── docs/

│ ├── architecture.md # This file

│ └── processes.md # Process flow diagrams

├── crates/

│ ├── zenii-core/ # Shared library (NO Tauri dependency)

│ │ ├── src/

│ │ │ ├── lib.rs # Module exports + Result<T> alias

│ │ │ ├── error.rs # ZeniiError enum (30 variants, thiserror)

│ │ │ ├── boot.rs # init_services() -> Services -> AppState, single boot entry point

│ │ │ ├── config/ # TOML config (schema + load/save + OS paths)

│ │ │ ├── db/ # rusqlite pool + WAL + migrations + spawn_blocking

│ │ │ ├── event_bus/ # EventBus trait + TokioBroadcastBus (13 events)

│ │ │ ├── memory/ # Memory trait + SqliteMemoryStore (FTS5 + vectors) + InMemoryStore

│ │ │ ├── credential/ # CredentialStore trait + KeyringStore + FileCredentialStore + InMemoryCredentialStore

│ │ │ ├── security/ # SecurityPolicy + AutonomyLevel + rate limiter + audit log

│ │ │ ├── tools/ # Tool trait + ToolRegistry (DashMap) + 19 built-in tools (16 base + 3 feature-gated)

│ │ │ ├── ai/ # AI agent (rig-core), providers, session manager, tool adapter, context engine, delegation

│ │ │ │ └── delegation/ # Coordinator, SubAgent, DelegationTask, dependency-wave execution

│ │ │ ├── workflows/ # WorkflowRegistry, WorkflowExecutor, StepRuntime, templates (feature-gated)

│ │ │ ├── gateway/ # axum HTTP+WS gateway (105 base + 28 feature-gated = 133 routes, auth middleware, error mapping, ZENII_VALIDATION)

│ │ │ ├── identity/ # SoulLoader + PromptComposer + defaults (SOUL/IDENTITY/USER.md)

│ │ │ ├── skills/ # SkillRegistry + bundled/user skills (markdown + YAML frontmatter)

│ │ │ ├── user/ # UserLearner + SQLite observations + privacy controls

│ │ │ ├── channels/ # Channel traits + registry + 3 adapters (Telegram/Slack/Discord, feature-gated)

│ │ │ │ ├── mod.rs # Module exports with feature gates

│ │ │ │ ├── traits.rs # Channel, ChannelLifecycle, ChannelSender traits

│ │ │ │ ├── message.rs # ChannelMessage with builder pattern

│ │ │ │ ├── registry.rs # ChannelRegistry (DashMap-backed)

│ │ │ │ ├── protocol.rs # ConnectorFrame wire protocol

│ │ │ │ ├── telegram/ # TelegramChannel + config + formatting

│ │ │ │ ├── slack/ # SlackChannel + API helpers + formatting

│ │ │ │ └── discord/ # DiscordChannel + config

│ │ │ └── scheduler/ # Cron + scheduled tasks, feature-gated (Phase 8)

│ │ └── tests/ # Integration tests

│ ├── zenii-desktop/ # Tauri 2.10 shell (desktop)

│ │ ├── Cargo.toml # tauri 2.10, 4 plugins, devtools feature

│ │ ├── build.rs # tauri_build::build()

│ │ ├── tauri.conf.json # 1280x720, CSP, com.sprklai.zenii

│ │ ├── capabilities/default.json

│ │ ├── icons/ # 7 icon files

│ │ └── src/

│ │ ├── main.rs # Entry + Linux WebKit DMA-BUF fix

│ │ ├── lib.rs # Builder: plugins, tray, IPC, close-to-tray

│ │ ├── commands.rs # 4 IPC + boot_gateway() + 7 tests

│ │ └── tray.rs # Show/Hide/Quit menu + 1 test

│ ├── zenii-cli/ # clap CLI

│ ├── zenii-tui/ # ratatui TUI

│ └── zenii-daemon/ # Headless daemon (full gateway server)

└── web/ # Svelte 5 frontend (SPA)

├── src/

│ ├── app.css # Tailwind v4 + shadcn theme tokens

│ ├── app.html # SPA shell

│ ├── lib/

│ │ ├── api/ # HTTP client + WebSocket manager

│ │ ├── tauri.ts # isTauri detection + 4 invoke wrappers

│ │ ├── components/

│ │ │ ├── ai-elements/ # svelte-ai-elements (9 component sets)

│ │ │ ├── ui/ # shadcn-svelte primitives (14 component sets)

│ │ │ ├── AuthGate.svelte

│ │ │ ├── ChatView.svelte

│ │ │ ├── Markdown.svelte

│ │ │ ├── SessionList.svelte

│ │ │ └── ThemeToggle.svelte

│ │ ├── stores/ # 7 Svelte 5 rune stores ($state, includes channels)

│ │ ├── paraglide/ # i18n (paraglide-js, 8 locales, 577 keys)

│ │ └── utils.ts # shadcn utility helpers

│ └── routes/ # 9 SPA routes

│ ├── +page.svelte # Home

│ ├── chat/+page.svelte # New chat

│ ├── chat/[id]/+page.svelte # Existing session

│ ├── memory/+page.svelte # Memory browser

│ ├── schedule/+page.svelte # Placeholder (Phase 8)

│ ├── settings/+page.svelte # General settings

│ ├── settings/providers/ # Provider config

│ ├── settings/channels/ # Channel credential + connection management

│ └── settings/persona/ # Identity + skills editor

├── package.json

└── vitest.config.ts # 26 unit tests (vitest)

Default Paths by OS

Resolved via directories::ProjectDirs::from("com", "sprklai", "zenii").

Source: crates/zenii-core/src/config/mod.rs

| OS | Config Path | Data Dir / DB Path |

|---|---|---|

| Linux | ~/.config/zenii/config.toml | ~/.local/share/zenii/zenii.db |

| macOS | ~/Library/Application Support/com.sprklai.zenii/config.toml | ~/Library/Application Support/com.sprklai.zenii/zenii.db |

| Windows | %APPDATA%\sprklai\zenii\config\config.toml | %APPDATA%\sprklai\zenii\data\zenii.db |

Override in config.toml:

data_dir = "/custom/data/path" # overrides default data directory

db_path = "/custom/path/zenii.db" # overrides database file directly

Feature Flag Composition

Trait-Driven Architecture

All major subsystems are abstracted behind traits, allowing swappable implementations for testing, migration, and scaling.

All binary crates receive these traits via AppState (Clone + Arc<T>), never concrete types.

Credential System

Per-Binary Keyring Access

| Binary | Keyring Access | Notes |

|---|---|---|

| Desktop | Direct | Tauri 2 has full OS access |

| Mobile | Direct | Tauri 2 mobile has keychain access |

| CLI | Direct | Runs as user process |

| TUI | Via gateway | Connects to daemon over HTTP |

| Daemon | Direct | Headless, runs as service |

Fallback Chain

At boot, the credential store is selected via a three-tier fallback:

- KeyringStore: OS keyring (macOS Keychain, Windows Credential Manager, Linux Secret Service). Preferred when available.

- FileCredentialStore: AES-256-GCM encrypted JSON file at {data_dir}/credentials.enc. Key derived from SHA-256 of machine characteristics (hostname, username, data_dir, service_id). Activated when keyring is unavailable (e.g., macOS after binary recompilation changes code signature).

- InMemoryCredentialStore: Volatile RAM-only store. Last resort when both persistent stores fail.

All credential values are wrapped with zeroize for secure memory cleanup.
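
The three-tier selection can be sketched as follows. This is a minimal illustration, not the zenii-core implementation: the `backend()` method, the `Probe` struct, and `select_store()` are hypothetical names, and the real stores talk to the OS keyring and an encrypted file rather than returning labels.

```rust
// Hypothetical, simplified trait: the real CredentialStore exposes
// get/set/delete operations, which are omitted here.
pub trait CredentialStore {
    fn backend(&self) -> &'static str;
}

pub struct KeyringStore;
pub struct FileCredentialStore;
pub struct InMemoryCredentialStore;

impl CredentialStore for KeyringStore {
    fn backend(&self) -> &'static str { "keyring" }
}
impl CredentialStore for FileCredentialStore {
    fn backend(&self) -> &'static str { "file" }
}
impl CredentialStore for InMemoryCredentialStore {
    fn backend(&self) -> &'static str { "memory" }
}

/// Availability flags stand in for real probes (an assumption of this sketch).
pub struct Probe {
    pub keyring_ok: bool,
    pub file_ok: bool,
}

/// Pick the first usable store: keyring, then encrypted file, then RAM.
pub fn select_store(probe: &Probe) -> Box<dyn CredentialStore> {
    if probe.keyring_ok {
        Box::new(KeyringStore)
    } else if probe.file_ok {
        Box::new(FileCredentialStore)
    } else {
        Box::new(InMemoryCredentialStore)
    }
}
```

Returning a boxed trait object keeps callers independent of which tier was selected at boot.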

Provider Registry

The ProviderRegistry manages AI provider configurations (OpenAI, Anthropic, Gemini, OpenRouter, Vercel AI Gateway, Ollama, and custom providers). It is DB-backed with 6 built-in providers seeded on first boot. Any OpenAI-compatible API endpoint can be added as a custom provider — just supply a base_url and API key.

Credential Key Naming Convention

| Scope | Pattern | Examples |

|---|---|---|

| AI Provider API Keys | api_key:{provider_id} | api_key:openai, api_key:tavily, api_key:brave |

| Channel Credentials | channel:{channel_id}:{field} | channel:telegram:token, channel:slack:bot_token |
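
A small sketch of helpers that build keys following the two patterns in the table. The function names `provider_key` and `channel_key` are hypothetical; only the key formats come from this document.

```rust
/// Builds the credential key for a provider API key: `api_key:{provider_id}`.
pub fn provider_key(provider_id: &str) -> String {
    format!("api_key:{provider_id}")
}

/// Builds the credential key for a channel field: `channel:{channel_id}:{field}`.
pub fn channel_key(channel_id: &str, field: &str) -> String {
    format!("channel:{channel_id}:{field}")
}
```

For example, `channel_key("slack", "bot_token")` yields `channel:slack:bot_token`.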

Messaging Channels System

The channels module provides trait-based messaging integration with external platforms. Each channel is feature-gated and managed through a concurrent ChannelRegistry.

Feature Flags

| Feature | Depends On | Adds |

|---|---|---|

| channels | (none) | Core channel traits + registry + gateway routes |

| channels-telegram | channels | TelegramChannel + teloxide dependency |

| channels-slack | channels | SlackChannel (uses existing reqwest/tungstenite) |

| channels-discord | channels | DiscordChannel + serenity dependency |

| workflows | (none) | WorkflowRegistry + WorkflowExecutor + petgraph + minijinja + 10 gateway routes |

Identity / Soul System

Identity defines the AI assistant's personality, tone, and behavior through 3 markdown files with YAML frontmatter. All prompt content comes from .md files — zero hardcoded prompt strings in Rust code.

Identity File Format (IDENTITY.md)

---

name: Zenii

version: "2.0"

description: AI-powered assistant

---

# Identity details...

- Storage: data_dir/identity/ (configurable via identity_dir in config.toml)

- Bundled defaults: embedded via include_str!() at compile time, written to disk on first run

- Reload: manual via POST /identity/reload endpoint (no notify dependency)

- API: GET /identity, GET /identity/{name}, PUT /identity/{name}, POST /identity/reload

Skills System

Skills are instructional markdown documents loaded into the agent's context. They follow the Claude Code model — pure markdown with YAML frontmatter metadata, no parameter substitution.

Skill File Format (Claude Code model)

---

name: system-prompt

description: Generates effective system prompts for AI agents

category: meta

---

# System Prompt Generator

When creating system prompts, follow these principles:

...

- No Tera/comrak: Skills are pure markdown context documents, not parameterized templates

- 2 tiers: Bundled (compile-time) + User (disk). User skills with same id override bundled.

- API: GET /skills, GET /skills/{id}, POST /skills, PUT /skills/{id}, DELETE /skills/{id}, POST /skills/reload

- Bundled skills cannot be deleted; only user skills support DELETE
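
The two-tier override and the delete rule can be sketched roughly as below. The `Skill` and `Tier` types and both function names are assumptions for illustration; the actual SkillRegistry may differ, for example in whether a bundled skill reappears after its user override is deleted (this sketch does not restore it).

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Tier {
    Bundled,
    User,
}

#[derive(Debug, Clone, PartialEq)]
pub struct Skill {
    pub id: String,
    pub tier: Tier,
    pub body: String,
}

/// Merge both tiers; a user skill replaces a bundled skill with the same id
/// because user skills are inserted last.
pub fn merge_skills(bundled: Vec<Skill>, user: Vec<Skill>) -> HashMap<String, Skill> {
    let mut merged = HashMap::new();
    for skill in bundled.into_iter().chain(user) {
        merged.insert(skill.id.clone(), skill);
    }
    merged
}

/// Only user skills may be deleted; bundled skills are rejected.
pub fn delete_skill(skills: &mut HashMap<String, Skill>, id: &str) -> Result<(), String> {
    let tier = skills.get(id).map(|s| s.tier);
    match tier {
        None => Err(format!("skill '{id}' not found")),
        Some(Tier::Bundled) => Err(format!("skill '{id}' is bundled and cannot be deleted")),
        Some(Tier::User) => {
            skills.remove(id);
            Ok(())
        }
    }
}
```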

User Profile + Progressive Learning

Zenii learns user preferences over time via explicit observation API. Observations are stored in SQLite with category-based organization and confidence scoring.

- USER.md: static user context template (part of identity system)

- UserLearner: SQLite-backed observation store with CRUD operations

- Observations: stored in user_observations table with category, key, value, confidence, timestamps

- Privacy: learning toggled via config, denied categories block specific observation types, TTL auto-expires old observations

- API: GET /user/observations, POST /user/observations, GET /user/observations/{key}, DELETE /user/observations/{key}, DELETE /user/observations, GET /user/profile

Context-Aware Agent System

The context engine provides 3-tier adaptive context injection that reduces token usage while keeping the agent contextually grounded.

Context Level Determination

| Condition | Level | Content |

|---|---|---|

| New session (0 messages) | Full | Boot + runtime + identity + user + capabilities |

| Continuing (recent messages, within gap) | Minimal | One-liner: "Zenii — AI assistant | date | OS | model" |

| Gap exceeded (> N minutes since last msg) | Full | Same as new session |

| Message count threshold exceeded | Full | Same as new session |

| Resumed session with prior messages | Summary | Full + prior conversation summary |

| Toggle disabled | Fallback | Config agent_system_prompt or default preamble |
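
The table above can be read as a decision function. The sketch below is one possible encoding: the struct fields, the threshold names, and the order in which the rows are checked (toggle first, then new session, then resumed session, then gap and message count) are assumptions, since the table does not state a precedence.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ContextLevel {
    Full,
    Minimal,
    Summary,
    Fallback,
}

/// Hypothetical inputs describing the current session.
#[derive(Debug, Clone, Copy)]
pub struct SessionInfo {
    pub enabled: bool,           // context injection toggle
    pub message_count: usize,    // messages already in the session
    pub resumed: bool,           // session reopened with prior messages
    pub minutes_since_last: u64, // gap since the last message
}

/// Hypothetical thresholds; the document refers to them only as "N minutes"
/// and a "message count threshold".
pub struct Thresholds {
    pub gap_minutes: u64,
    pub message_count: usize,
}

pub fn determine_level(s: &SessionInfo, t: &Thresholds) -> ContextLevel {
    if !s.enabled {
        return ContextLevel::Fallback;
    }
    if s.message_count == 0 {
        return ContextLevel::Full;
    }
    if s.resumed {
        return ContextLevel::Summary;
    }
    if s.minutes_since_last > t.gap_minutes || s.message_count > t.message_count {
        return ContextLevel::Full;
    }
    ContextLevel::Minimal
}
```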

Prompt Strategy System

The prompt strategy system (Phase 8.13) replaces the dual-compose pipeline with a plugin-based architecture that reduces preamble tokens by ~65%:

PromptStrategyRegistry (implements PromptStrategy)

├── base: CompactStrategy or LegacyStrategy

│ └── Layers 0 + 1 + 3 (identity, runtime, overrides)

└── plugins: Vec<Arc<dyn PromptPlugin>>

├── MemoryPlugin (always)

├── UserObservationsPlugin (always)

├── SkillsPlugin (always)

├── LearnedRulesPlugin (if self_evolution)

├── ChannelContextPlugin (feature: channels)

└── SchedulerContextPlugin (feature: scheduler)

Handlers call state.prompt_strategy.assemble(&AssemblyRequest), a single entry point that runs three steps:

- Base strategy produces Layer 0 (identity), Layer 1 (runtime), Layer 3 (overrides)

- Plugins contribute Layer 2 fragments with domain filtering and priority

- Registry merges all fragments and applies token budget trimming

Config: prompt_compact_identity (default true) selects CompactStrategy vs LegacyStrategy. prompt_max_preamble_tokens (default 1500) controls the overflow budget.
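
To make the assembly steps concrete, here is a simplified sketch of merging base layers with plugin fragments under a token budget. Everything beyond the layer numbers and the idea of domain filtering, priority, and budget trimming is an assumption: the `Fragment` and `StaticPlugin` types, the word-count token estimate, the rule that base layers are never trimmed, and the rule that higher priority numbers are kept first.

```rust
pub struct Fragment {
    pub layer: u8,
    pub priority: u8,
    pub text: String,
}

// Crude token estimate (whitespace-separated words); an assumption of this sketch.
fn estimate_tokens(text: &str) -> usize {
    text.split_whitespace().count()
}

pub trait PromptPlugin {
    /// Returns a Layer 2 fragment, or None when the plugin does not apply.
    fn contribute(&self, domain: &str) -> Option<Fragment>;
}

/// Hypothetical plugin with fixed text, used only for illustration.
pub struct StaticPlugin {
    pub domain: Option<&'static str>,
    pub priority: u8,
    pub text: &'static str,
}

impl PromptPlugin for StaticPlugin {
    fn contribute(&self, domain: &str) -> Option<Fragment> {
        // Domain filtering: a plugin bound to a domain only contributes there.
        if self.domain.map_or(true, |d| d == domain) {
            Some(Fragment { layer: 2, priority: self.priority, text: self.text.to_string() })
        } else {
            None
        }
    }
}

pub fn assemble(
    base: Vec<Fragment>,
    plugins: &[Box<dyn PromptPlugin>],
    domain: &str,
    max_tokens: usize,
) -> String {
    let mut used: usize = base.iter().map(|f| estimate_tokens(&f.text)).sum();
    let mut layer2: Vec<Fragment> = plugins.iter().filter_map(|p| p.contribute(domain)).collect();
    // Highest priority first, so trimming drops the least important fragments.
    layer2.sort_by(|a, b| b.priority.cmp(&a.priority));
    let mut kept = base;
    for frag in layer2 {
        let cost = estimate_tokens(&frag.text);
        if used + cost <= max_tokens {
            used += cost;
            kept.push(frag);
        }
    }
    // Stable sort keeps the priority order within Layer 2.
    kept.sort_by_key(|f| f.layer);
    kept.iter().map(|f| f.text.as_str()).collect::<Vec<_>>().join("\n")
}
```

With a budget of 5 "tokens" and three single-word base layers, only the highest-priority plugin fragment that still fits is kept.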

DB Schema (migration v5)

- context_summaries: cached AI-generated summaries with hash-based change detection

- skill_proposals: human-in-the-loop skill change approval workflow

- sessions.summary: conversation summary column for session resume

Self-Evolving Framework

The agent can learn user preferences and propose skill changes, all subject to human approval.

Gateway Routes

All clients communicate via the HTTP+WebSocket gateway at localhost:18981. Routes are grouped by subsystem (105 base + 28 feature-gated = 133 total).

Health (1 route, no auth)

| Method | Path | Description |

|---|---|---|

| GET | /health | Health check |

Sessions & Chat (10 routes)

| Method | Path | Description |

|---|---|---|

| POST | /sessions | Create new chat session |

| GET | /sessions | List all sessions |

| GET | /sessions/{id} | Get session details |

| PUT | /sessions/{id} | Update session |

| DELETE | /sessions/{id} | Delete session |

| POST | /sessions/{id}/generate-title | Auto-generate session title via AI |

| GET | /sessions/{id}/messages | Get messages for a session |

| POST | /sessions/{id}/messages | Send message to session |

| DELETE | /sessions/{id}/messages/{message_id}/and-after | Delete message and all after it |

Chat (1 route)

| Method | Path | Description |

|---|---|---|

| POST | /chat | Chat with AI agent |

Memory (5 routes)

| Method | Path | Description |

|---|---|---|

| POST | /memory | Create memory entry |

| GET | /memory | Recall/search memories |

| GET | /memory/{key} | Get memory by key |

| PUT | /memory/{key} | Update memory by key |

| DELETE | /memory/{key} | Delete memory by key |

Configuration (3 routes)

| Method | Path | Description |

|---|---|---|

| GET | /config | Get current configuration (auth token redacted) |

| PUT | /config | Update configuration |

| GET | /config/file | Get raw config file content |

Setup / Onboarding (1 route)

| Method | Path | Description |

|---|---|---|

| GET | /setup/status | Check if first-run setup is needed (missing location/timezone) |

Credentials (5 routes)

| Method | Path | Description |

|---|---|---|

| POST | /credentials | Set a credential (key + value) |

| GET | /credentials | List all credential keys (values hidden) |

| DELETE | /credentials/{key} | Delete a credential |

| GET | /credentials/{key}/value | Get credential value (explicit retrieval) |

| GET | /credentials/{key}/exists | Check if credential exists |

Providers & Models (12 routes)

| Method | Path | Description |

|---|---|---|

| GET | /providers | List all providers |

| POST | /providers | Create user-defined provider |

| GET | /providers/with-key-status | List providers with API key status |

| GET | /providers/default | Get default model |

| PUT | /providers/default | Set default model |

| GET | /providers/{id} | Get provider details |

| PUT | /providers/{id} | Update provider |

| DELETE | /providers/{id} | Delete user-defined provider |

| POST | /providers/{id}/test | Test provider connection (with latency) |

| POST | /providers/{id}/models | Add model to provider |

| DELETE | /providers/{id}/models/{model_id} | Delete model from provider |

| GET | /models | List all available models across providers |

Tools (2 routes)

| Method | Path | Description |

|---|---|---|

| GET | /tools | List available tools |

| POST | /tools/{name}/execute | Execute a tool by name |

Permissions (4 routes)

| Method | Path | Description |

|---|---|---|

| GET | /permissions | List all known surfaces (desktop, cli, tui, telegram, slack, discord) |

| GET | /permissions/{surface} | List tool permissions for a surface |

| PUT | /permissions/{surface}/{tool} | Set a permission override for a tool on a surface |

| DELETE | /permissions/{surface}/{tool} | Remove an override (fall back to risk-level default) |

System (1 route)

| Method | Path | Description |

|---|---|---|

| GET | /system/info | System information |

WebSocket Channels (1 route)

| Path | Description |

|---|---|

| /ws/chat | Streaming chat responses |

Identity (4 routes)

| Method | Path | Description |

|---|---|---|

| GET | /identity | List all identity files |

| GET | /identity/{name} | Get identity file content |

| PUT | /identity/{name} | Update identity file content |

| POST | /identity/reload | Force reload all identity files |

Skills (6 routes)

| Method | Path | Description |

|---|---|---|

| GET | /skills | List all skills (optional ?category= filter) |

| GET | /skills/{id} | Get full skill definition |

| POST | /skills | Create user skill |

| PUT | /skills/{id} | Update skill content |

| DELETE | /skills/{id} | Delete user skill (bundled cannot be deleted) |

| POST | /skills/reload | Force reload all skills |

Skill Proposals (4 routes)

| Method | Path | Description |

|---|---|---|

| GET | /skills/proposals | List pending skill proposals |

| POST | /skills/proposals/{id}/approve | Approve and execute a proposal |

| POST | /skills/proposals/{id}/reject | Reject a proposal |

| DELETE | /skills/proposals/{id} | Delete a proposal |

User Profile + Learning (6 routes)

| Method | Path | Description |

|---|---|---|

| GET | /user/observations | List observations (optional ?category= filter) |

| POST | /user/observations | Add observation |

| GET | /user/observations/{key} | Get observation by key |

| DELETE | /user/observations/{key} | Delete observation by key |

| DELETE | /user/observations | Clear all observations |

| GET | /user/profile | Get computed user context string |

Channels (10 routes, 9 feature-gated)

| Method | Path | Feature | Description |

|---|---|---|---|

| POST | /channels/{name}/test | always | Test channel credentials |

| GET | /channels | channels | List registered channels with status |

| GET | /channels/{name}/status | channels | Get channel status |

| POST | /channels/{name}/send | channels | Send message via channel |

| POST | /channels/{name}/connect | channels | Connect channel |

| POST | /channels/{name}/disconnect | channels | Disconnect channel |

| GET | /channels/{name}/health | channels | Health check |

| POST | /channels/{name}/message | channels | Webhook message endpoint |

| GET | /channels/sessions | channels | List channel sessions |

| GET | /channels/sessions/{id}/messages | channels | List channel session messages |

Scheduler (7 routes, feature-gated)

| Method | Path | Description |

|---|---|---|

| POST | /scheduler/jobs | Create scheduled job |

| GET | /scheduler/jobs | List all jobs |

| PUT | /scheduler/jobs/{id} | Update job |

| DELETE | /scheduler/jobs/{id} | Delete job |

| PUT | /scheduler/jobs/{id}/toggle | Toggle job enabled/disabled |

| GET | /scheduler/jobs/{id}/history | Get job execution history |

| GET | /scheduler/status | Scheduler status |

Embeddings (5 routes)

| Method | Path | Description |

|---|---|---|

| GET | /embeddings/status | Current embedding provider and model info |

| POST | /embeddings/test | Test embedding generation |

| POST | /embeddings/embed | Embed arbitrary text |

| POST | /embeddings/download | Download local embedding model |

| POST | /embeddings/reindex | Re-embed all stored memories |

Plugins (9 routes)

| Method | Path | Description |

|---|---|---|

| GET | /plugins | List all installed plugins |

| POST | /plugins/install | Install plugin from git URL or local path |

| GET | /plugins/available | List available plugins from registry |

| DELETE | /plugins/{name} | Remove installed plugin |

| GET | /plugins/{name} | Get plugin info and manifest |

| PUT | /plugins/{name}/toggle | Enable or disable a plugin |

| POST | /plugins/{name}/update | Update plugin to latest version |

| GET | /plugins/{name}/config | Get plugin configuration |

| PUT | /plugins/{name}/config | Update plugin configuration |

Agent Delegation (2 routes)

| Method | Path | Description |

|---|---|---|

| GET | /agents/active | List active delegation runs |

| POST | /agents/{id}/cancel | Cancel a delegation run |

Approvals (3 routes)

| Method | Path | Description |

|---|---|---|

| GET | /approvals/rules | List approval rules |

| DELETE | /approvals/rules/{id} | Delete an approval rule |

| POST | /approvals/{id}/respond | Respond to a pending approval |

Workflows (10 routes, feature-gated)

| Method | Path | Feature | Description |

|---|---|---|---|

| POST | /workflows | workflows | Create workflow from TOML |

| GET | /workflows | workflows | List all workflows |

| GET | /workflows/{id} | workflows | Get workflow definition |

| PUT | /workflows/{id} | workflows | Update workflow definition |

| DELETE | /workflows/{id} | workflows | Delete workflow |

| GET | /workflows/{id}/raw | workflows | Get raw TOML source |

| POST | /workflows/{id}/run | workflows | Execute workflow |

| POST | /workflows/{id}/cancel | workflows | Cancel running workflow |

| GET | /workflows/{id}/history | workflows | Get run history |

| GET | /workflows/{id}/runs/{run_id} | workflows | Get run details with step results |

WebSocket Endpoints (2 routes)

| Path | Feature | Description |

|---|---|---|

| /ws/chat | always | Streaming chat responses |

| /ws/notifications | always | Push notifications to clients |

API Docs (2 routes, feature-gated)

| Method | Path | Feature | Description |

|---|---|---|---|

| GET | /api-docs | api-docs | Scalar interactive documentation UI |

| GET | /api-docs/openapi.json | api-docs | OpenAPI 3.1 JSON specification |

Desktop App Architecture

The desktop app is a Tauri 2.10 shell wrapping the SvelteKit SPA frontend. It embeds the gateway server by default, so no separate daemon process is required.

Tauri Plugins

| Plugin | Version | Purpose |

|---|---|---|

| tray-icon | built-in | System tray with Show/Hide/Quit menu |

| window-state | 2.4.1 | Persist window size, position, maximized state |

| single-instance | 2.4.0 | Enforce single running instance, focus existing |

| opener | 2.5.3 | Open data directory in OS file manager |

| devtools | 2.0.1 | WebView inspector (feature-gated, dev only) |

IPC Commands

| Command | Description |

|---|---|

| close_to_tray | Hide window to system tray |

| show_window | Show and focus the main window |

| get_app_version | Return app version string |

| open_data_dir | Open Zenii data directory in OS file manager |

Desktop Boot Flow

Hybrid Gateway Architecture

The desktop app supports two gateway modes:

- Embedded (default): The gateway server starts in a background Tokio task during setup(). A oneshot channel provides graceful shutdown. This is the zero-configuration path -- users launch the desktop app and everything works.

- External: If ZENII_GATEWAY_URL is set to a valid URL, the desktop app connects to an external daemon instead of starting its own gateway. Useful for multi-device setups or when running the daemon as a system service.
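
The mode selection can be sketched as a pure function over the environment value. The `GatewayMode` enum and both function names are hypothetical. The prefix check is a simplified stand-in for real URL validation, and falling back to embedded mode on an invalid value is an assumption.

```rust
#[derive(Debug, PartialEq, Eq)]
pub enum GatewayMode {
    /// Start the gateway inside the desktop process.
    Embedded,
    /// Connect to an external daemon at this URL.
    External(String),
}

/// Decide the mode from the value of ZENII_GATEWAY_URL (None when unset).
pub fn gateway_mode(env_value: Option<&str>) -> GatewayMode {
    match env_value.map(str::trim) {
        Some(url) if url.starts_with("http://") || url.starts_with("https://") => {
            GatewayMode::External(url.to_string())
        }
        _ => GatewayMode::Embedded,
    }
}

/// Convenience wrapper that reads the real environment variable.
pub fn gateway_mode_from_env() -> GatewayMode {
    gateway_mode(std::env::var("ZENII_GATEWAY_URL").ok().as_deref())
}
```

Keeping the decision separate from the environment lookup makes it testable without mutating process state.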

Frontend Integration

The frontend detects the Tauri environment via window.__TAURI__ and provides typed wrappers in web/src/lib/tauri.ts:

- isTauri -- boolean flag for environment detection

- closeToTray() -- invoke close_to_tray IPC command

- showWindow() -- invoke show_window IPC command

- getAppVersion() -- invoke get_app_version IPC command

- openDataDir() -- invoke open_data_dir IPC command

All wrappers are no-ops when running in a browser (non-Tauri) context, so the same frontend works for both desktop and web.

Frontend i18n

- paraglide-js v2 for compile-time, type-safe translations

- 8 locales auto-detected from project.inlang/settings.json (EN, ZH, ES, JA, HI, PT, KO, FR)

- Locale store (locale.svelte.ts) mirrors theme store pattern

- messages/{locale}.json flat-key files with _meta_label for native names

- 577 message keys across 40+ components

- Language switcher in Settings > General

Scheduler Notification Flow (Stage 8.6.1)

The PayloadExecutor (scheduler/payload_executor.rs) handles 4 payload types dispatched by the scheduler tick loop. The TokioScheduler and AppState have a circular dependency resolved via OnceCell — the scheduler is constructed first, then wired to AppState post-construction via wire().
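
The post-construction wiring can be illustrated with the standard library alone. This sketch uses `std::sync::OnceLock` as a stand-in for the OnceCell mentioned above and stores a `Weak` reference to avoid a reference cycle; both choices, the `Scheduler` type name, and the `name` placeholder field are assumptions rather than the actual TokioScheduler design.

```rust
use std::sync::{Arc, OnceLock, Weak};

pub struct AppState {
    pub scheduler: Arc<Scheduler>,
    pub name: &'static str, // placeholder for the agent/channel handles
}

pub struct Scheduler {
    // Filled in exactly once, after AppState exists.
    state: OnceLock<Weak<AppState>>,
}

impl Scheduler {
    pub fn new() -> Self {
        Scheduler { state: OnceLock::new() }
    }

    /// Wire the scheduler to AppState; returns false if it was already wired.
    pub fn wire(&self, state: &Arc<AppState>) -> bool {
        self.state.set(Arc::downgrade(state)).is_ok()
    }

    /// AppState as seen from a tick; None before wiring or after AppState is dropped.
    pub fn app_state(&self) -> Option<Arc<AppState>> {
        self.state.get().and_then(|weak| weak.upgrade())
    }
}

/// Construct the scheduler first, then AppState, then close the loop.
pub fn boot() -> Arc<AppState> {
    let scheduler = Arc::new(Scheduler::new());
    let state = Arc::new(AppState { scheduler: Arc::clone(&scheduler), name: "zenii" });
    scheduler.wire(&state);
    state
}
```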

Key Design Decisions

| Decision | Rationale |

|---|---|

| OnceCell wiring | TokioScheduler needs AppState for agent/channel access, but AppState contains the scheduler — OnceCell breaks the cycle |

| WS /ws/notifications | Dedicated endpoint for push notifications, separate from /ws/chat |

| svelte-sonner toasts | Frontend subscribes to WS notifications and displays via toast library |

| tauri-plugin-notification | Desktop OS-level notifications when app is in tray |

Channel Router Pipeline (Stage 8.7)

The ChannelRouter struct orchestrates the full message processing pipeline from inbound channel message to outbound response. It runs as a background task with an mpsc receiver and watch stop signal.

Gateway Integration

| Route | Description |

|---|---|

| POST /channels/{name}/message | Webhook endpoint: injects message into ChannelRouter pipeline |

Frontend: Session Source

Channel-originated sessions carry a source field displayed as a platform badge (Telegram/Slack/Discord icon) in the session list UI.

Channel Lifecycle Hooks (Stage 8.8)

Lifecycle hooks run at key points in the ChannelRouter pipeline. They are best-effort — failures are logged but do not block the pipeline.

| Platform | on_agent_start | on_tool_use | on_agent_complete |

|---|---|---|---|

| Telegram | Send status message | Refresh typing indicator (4s) | Stop typing refresh |

| Slack | Post ephemeral "thinking..." | Update ephemeral message | Delete ephemeral message |

| Discord | Start typing indicator | (no-op) | (typing auto-expires) |

Test Debt and Hardening (Stage 8.9)

Stage 8.9 addressed test coverage gaps and hardened critical modules.

ProcessTool Kill Action

The ProcessTool gained a kill action using sysinfo-based process lookup. Kill requires Full autonomy level — lower autonomy levels are denied with ZeniiError::PolicyDenied.

Context Engine Tests (52 tests)

Comprehensive unit test coverage for:

- ContextEngine: level determination, compose output, config toggles

- BootContext: OS/arch/hostname/locale detection

- Context summaries: hash-based cache invalidation, DB storage/retrieval

- Tier injection: Full/Minimal/Summary content verification

Agent Tool Loop Tests (5 tests)

Integration tests verifying RigToolAdapter dispatch — agent correctly invokes tools during the chat loop and feeds results back to the LLM.

Agent Action Tools (Phase 8.10)

Four new agent-callable tools give the AI agent direct control over system functions:

Autonomous Reasoning Engine (Phase 8.11)

The ReasoningEngine provides an extensible pipeline for autonomous multi-step agent operation, with per-request tool call deduplication to prevent redundant API calls:

Key components:

- ReasoningEngine -- orchestrates agent calls with pluggable strategy pipeline

- ToolCallCache -- per-request DashMap<u64, CachedResult> keyed by hash(tool_name + args_json). Shared across all RigToolAdapters via Arc. Caches both successes and errors. Tracks execution count via AtomicU32. Controlled by tool_dedup_enabled config (default true)

- ContinuationStrategy -- tool-aware continuation detection. If tool_calls_made > 0, skips the text heuristic entirely (prevents false positives like "Let me tell you about..."). Falls back to planning/refusal language detection only when no tools were called. Respects agent_max_continuations limit (default 1)

- BootContext -- system environment discovery (OS, arch, hostname, home dir, desktop, downloads, shell, username)
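
A per-request deduplication cache along the lines of ToolCallCache might look like the sketch below. It substitutes a `Mutex<HashMap>` for the DashMap so that it needs only the standard library, and the `get_or_run` and `execution_count` methods, the `Result<String, String>` shape of `CachedResult`, and the tool names in the usage are assumptions. Hash collisions and two identical calls racing each other are not handled.

```rust
use std::collections::hash_map::DefaultHasher;
use std::collections::HashMap;
use std::hash::{Hash, Hasher};
use std::sync::atomic::{AtomicU32, Ordering};
use std::sync::Mutex;

/// Both successes and errors are cached.
pub type CachedResult = Result<String, String>;

#[derive(Default)]
pub struct ToolCallCache {
    entries: Mutex<HashMap<u64, CachedResult>>,
    executions: AtomicU32,
}

impl ToolCallCache {
    /// Key derived from the tool name and its JSON-encoded arguments.
    fn key(tool_name: &str, args_json: &str) -> u64 {
        let mut hasher = DefaultHasher::new();
        tool_name.hash(&mut hasher);
        args_json.hash(&mut hasher);
        hasher.finish()
    }

    /// Return the cached result, or run the tool once and remember the outcome.
    pub fn get_or_run<F>(&self, tool_name: &str, args_json: &str, run: F) -> CachedResult
    where
        F: FnOnce() -> CachedResult,
    {
        let key = Self::key(tool_name, args_json);
        let cached = self.entries.lock().unwrap().get(&key).cloned();
        if let Some(hit) = cached {
            return hit;
        }
        self.executions.fetch_add(1, Ordering::Relaxed);
        let result = run();
        self.entries.lock().unwrap().insert(key, result.clone());
        result
    }

    /// Number of calls that actually executed (cache misses).
    pub fn execution_count(&self) -> u32 {
        self.executions.load(Ordering::Relaxed)
    }
}
```

Wrapping the cache in an `Arc` would let several adapters share it for the duration of one request.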

Deduplication defaults

| Config | Default | Range | Description |

|---|---|---|---|

| agent_max_turns | 8 | 1-32 | Max rig-core agentic turns per agent.chat() |

| agent_max_continuations | 1 | 0-5 | Max ReasoningEngine continuation rounds |

| tool_dedup_enabled | true | -- | Enable per-request tool call cache |

Semantic Memory and Embeddings (Phase 8.11)

Hybrid search combining FTS5 full-text search with vector similarity:

Gateway embedding routes (5):

- GET /embeddings/status -- current provider and model info

- POST /embeddings/test -- test embedding generation

- POST /embeddings/embed -- embed arbitrary text

- POST /embeddings/download -- download local model

- POST /embeddings/reindex -- re-embed all stored memories

Memory Quality Improvements

Three quality enhancements applied at the SqliteMemoryStore layer:

BM25 Field Weighting (M5)

FTS5 query uses per-field BM25 weights so key matches rank higher than content or category:

bm25(memories_fts, 2.0, 1.0, 0.5)

-- key=2.0, content=1.0, category=0.5

Configurable via memory_bm25_key_weight, memory_bm25_content_weight, memory_bm25_category_weight.

Temporal Decay (M1)

Recall scores are multiplied by an exponential decay factor based on how many days have passed since the memory was last updated:

final_score = raw_score × exp(-λ × days_since_update)

Default λ=0.01 gives approximately a 70-day half-life. Configurable via memory_decay_enabled (default true) and memory_decay_lambda (default 0.01).
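
As a worked check of the formula, the half-life follows from setting exp(-λ × t) = 0.5, which gives t = ln(2) / λ, or about 69.3 days for λ = 0.01. The function names below are hypothetical helpers for illustration.

```rust
/// final_score = raw_score * exp(-lambda * days_since_update)
pub fn decayed_score(raw_score: f64, lambda: f64, days_since_update: f64) -> f64 {
    raw_score * (-lambda * days_since_update).exp()
}

/// Days after which a score halves: ln(2) / lambda.
pub fn half_life_days(lambda: f64) -> f64 {
    std::f64::consts::LN_2 / lambda
}
```

A memory untouched for one half-life keeps half of its raw score, and one untouched for two half-lives keeps a quarter.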

Semantic Deduplication (M4)

Before inserting a new memory, store() checks whether any existing entry has a vector cosine similarity ≥ threshold. If so, the existing entry's content is updated in-place instead of creating a duplicate. Configurable via memory_dedup_enabled (default true) and memory_dedup_threshold (default 0.92). Only active when an embedding provider is configured.
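
The deduplication check can be sketched with a plain vector of entries. The `Entry` type and the `store` signature are simplified assumptions, as is the choice to update the first entry that meets the threshold rather than the best match, and to leave the stored embedding unchanged.

```rust
/// Cosine similarity of two equal-length vectors; 0.0 for mismatched or zero vectors.
pub fn cosine_similarity(a: &[f32], b: &[f32]) -> f32 {
    if a.len() != b.len() || a.is_empty() {
        return 0.0;
    }
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let norm_a: f32 = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let norm_b: f32 = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if norm_a == 0.0 || norm_b == 0.0 {
        0.0
    } else {
        dot / (norm_a * norm_b)
    }
}

pub struct Entry {
    pub content: String,
    pub embedding: Vec<f32>,
}

/// Returns true if an existing entry was updated in place,
/// false if a new entry was inserted.
pub fn store(entries: &mut Vec<Entry>, content: &str, embedding: Vec<f32>, threshold: f32) -> bool {
    if let Some(existing) = entries
        .iter_mut()
        .find(|e| cosine_similarity(&e.embedding, &embedding) >= threshold)
    {
        existing.content = content.to_string();
        return true;
    }
    entries.push(Entry { content: content.to_string(), embedding });
    false
}
```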

Phase 18 Hardening

Phase 18 addressed 51 issues from two code audits across 8 parallel work streams:

- ArcSwap config -- runtime config hot-reload via arc_swap::ArcSwap<AppConfig> replacing manual TOML write + reload

- Security -- CORS origin validation improvements, path traversal protection in file tools

- Concurrency -- eliminated data races in scheduler, security, and tools modules

- Channel reliability -- UTF-8 safe message splitting, Slack echo loop prevention

- Frontend -- svelte-check warnings reduced from 19 to 0

- CI/CD -- all-features testing added to CI pipeline
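
As an illustration of the UTF-8 safe message splitting mentioned under channel reliability, the sketch below splits on byte length while never cutting a character in half. The function name and the byte-based limit are assumptions; the actual channel adapters may count characters or apply platform-specific limits.

```rust
/// Split `text` into chunks of at most `max_bytes` bytes, always on
/// character boundaries.
pub fn split_utf8_safe(text: &str, max_bytes: usize) -> Vec<&str> {
    let mut chunks = Vec::new();
    let mut rest = text;
    while !rest.is_empty() {
        let mut end = rest.len().min(max_bytes);
        // Back up to the nearest character boundary.
        while end > 0 && !rest.is_char_boundary(end) {
            end -= 1;
        }
        if end == 0 {
            // The limit is smaller than the next character: emit it whole.
            end = rest.chars().next().map_or(rest.len(), |c| c.len_utf8());
        }
        let (head, tail) = rest.split_at(end);
        chunks.push(head);
        rest = tail;
    }
    chunks
}
```

Slicing a `&str` at an arbitrary byte offset would panic on multi-byte characters, which is the failure this approach avoids.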

Workflow Audit Hardening

A whole-app workflow audit addressing security, agent safety, session lifecycle, event bus hygiene, and frontend resilience. 16 new tests added (1,306 total).

Security Hardening

9 additional commands added to BLOCKED_COMMANDS in security/policy.rs: eval, exec, nc, ncat, socat, docker, systemctl, xdg-open, open. Pipe-to-shell patterns (e.g., curl | sh) were already caught by | in INJECTION_PATTERNS.
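
A minimal sketch of how such a check could work is shown below. Only the nine newly blocked commands and the `|` pattern come from this document; the real BLOCKED_COMMANDS and INJECTION_PATTERNS lists are longer, and the `is_allowed` function and its parsing rules are hypothetical.

```rust
// Only the entries named in this section; the real lists contain more.
const BLOCKED_COMMANDS: &[&str] = &[
    "eval", "exec", "nc", "ncat", "socat", "docker", "systemctl", "xdg-open", "open",
];
const INJECTION_PATTERNS: &[&str] = &["|"];

/// Returns false for empty input, injection patterns, or a blocked program name.
pub fn is_allowed(command_line: &str) -> bool {
    if INJECTION_PATTERNS.iter().any(|p| command_line.contains(p)) {
        return false;
    }
    match command_line.split_whitespace().next() {
        Some(first) => {
            // Compare on the program name so "/usr/bin/nc" is treated like "nc".
            let program = first.rsplit('/').next().unwrap_or(first);
            !BLOCKED_COMMANDS.iter().any(|blocked| *blocked == program)
        }
        None => false,
    }
}
```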

Agent Execution Safety

Key changes in gateway/handlers/ws.rs:

- Agent timeout: tokio::time::timeout() with configurable agent_timeout_secs (default 300s)

- Client disconnect abort: JoinHandle stored, tokio::select! detects WS close and aborts the agent task

- Tool event lag handling: when tool_rx lags, sends WsOutbound::Warning with count of dropped events

- DB persistence retry: one retry after 100ms on failure, WsOutbound::Warning sent to client on final failure

Session Lifecycle

SessionManager::cleanup_old_sessions() added in ai/session.rs. Deletes sessions older than session_max_age_days (default 90). Runs automatically on boot during init_services().

Event Bus Cleanup

- 10 never-published AppEvent variants removed from event_bus/mod.rs: SessionCreated, SessionDeleted, MessageReceived, StreamChunk, StreamDone, ToolExecutionStarted, ToolExecutionCompleted, ProviderChanged, MemoryStored, GatewayStarted

- Event bus capacity now reads from config.event_bus_capacity (default 256) instead of being hardcoded

Notification Routing

heartbeat_alert field added to NotificationRouting (backend routing.rs + frontend notifications.svelte.ts). Frontend now uses hasTarget("heartbeat_alert", ...) instead of piggybacking on scheduler_job_completed.

Frontend Resilience

- activeToolCalls array capped at 50 entries in messages.svelte.ts

- Session store retry replaced with exponential backoff (3 attempts: 1s, 2s, 4s) in sessions.svelte.ts

Scheduler Validation

add_job() validates start_hour != end_hour in active hours configuration (tokio_scheduler.rs).

New Config Fields

| Field | Type | Default | Description |

|---|---|---|---|

| agent_timeout_secs | u64 | 300 | Maximum seconds for agent execution before timeout |

| event_bus_capacity | usize | 256 | Capacity of the tokio broadcast event bus |

| session_max_age_days | u32 | 90 | Days before old sessions are cleaned up on boot |

Plugin Architecture (Phase 9)

Plugin Lifecycle

- Discovery: On boot, PluginRegistry scans plugins_dir for installed plugins

- Registration: Each plugin's tools are wrapped in PluginToolAdapter and registered in ToolRegistry

- Execution: When a tool is called, PluginProcess spawns the plugin binary and communicates via JSON-RPC 2.0 over stdio

- Recovery: Crashed plugins are automatically restarted up to plugin_max_restart_attempts times

- Idle Shutdown: Inactive plugin processes are terminated after plugin_idle_timeout_secs

Client Interfaces

Plugin management is available across all interfaces:

- CLI: zenii plugin <cmd> (list, install, remove, update, enable, disable, info) -- HTTP calls to gateway

- Web/Desktop: PluginsSettings.svelte component with full install/remove/enable/disable UI via pluginsStore

- TUI: PluginList mode (press p from session list) with keybindings: j/k navigate, e toggle enable/disable, d remove, i install, r refresh, Esc back

Plugin Manifest Format (plugin.toml)

[plugin]

name = "weather"

version = "1.0.0"

description = "Weather forecast tool"

author = "example"

[permissions]

network = true

filesystem = false

[[tools]]

name = "get_weather"

binary = "weather-tool"

description = "Get weather for a location"

[[skills]]

name = "weather-prompt"

file = "skills/weather.md"

Context-Driven Auto-Discovery

The context engine automatically detects which feature domains are relevant to the user's message and injects only pertinent context and agent rules.

Domain Detection

Domain-to-Category Mapping

| Domain | Agent Rule Category |

|---|---|

| Channels | channel |

| Scheduler | scheduling |

| Skills / Tools | tool_usage |

| Always included | general |

Key files: ai/context.rs (ContextDomain enum, detect_relevant_domains(), domains_to_rule_categories())
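
The mapping in the table can be sketched as below. The function names come from the key-files note, but their signatures, the ContextDomain variants, and the keyword lists are all assumptions for illustration; the real detection logic in ai/context.rs may work differently.

```rust
use std::collections::BTreeSet;

/// Hypothetical variants; the real ContextDomain enum may differ.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
enum ContextDomain {
    Channels,
    Scheduler,
    Skills,
    Tools,
}

fn contains_any(text: &str, words: &[&str]) -> bool {
    words.iter().any(|w| text.contains(*w))
}

/// Keyword matching is an assumption used to keep the sketch self-contained.
fn detect_relevant_domains(message: &str) -> BTreeSet<ContextDomain> {
    let lower = message.to_lowercase();
    let mut domains = BTreeSet::new();
    if contains_any(&lower, &["telegram", "slack", "discord", "channel"]) {
        domains.insert(ContextDomain::Channels);
    }
    if contains_any(&lower, &["schedule", "remind", "cron"]) {
        domains.insert(ContextDomain::Scheduler);
    }
    if contains_any(&lower, &["skill"]) {
        domains.insert(ContextDomain::Skills);
    }
    if contains_any(&lower, &["tool"]) {
        domains.insert(ContextDomain::Tools);
    }
    domains
}

/// Maps detected domains to agent rule categories; "general" is always included.
fn domains_to_rule_categories(domains: &BTreeSet<ContextDomain>) -> BTreeSet<&'static str> {
    let mut categories = BTreeSet::from(["general"]);
    for domain in domains {
        categories.insert(match domain {
            ContextDomain::Channels => "channel",
            ContextDomain::Scheduler => "scheduling",
            ContextDomain::Skills | ContextDomain::Tools => "tool_usage",
        });
    }
    categories
}
```

A message that matches no domain still yields the general category, so baseline rules are always injected.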

AgentSelfTool

The agent_notes tool allows the agent to learn, recall, and forget behavioral rules that persist across conversations and get auto-injected into context.

Data Model

- Table: agent_rules (DB migration v10)

- Schema: id, content, category, created_at, active

- Categories: general, channel, scheduling, user_preference, tool_usage

Tool Actions

| Action | Description | Required Params |

|---|---|---|

| learn | Create a new behavioral rule | content, optional category |

| rules | List active rules | optional category filter |

| forget | Soft-delete a rule by ID | id |
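
The three actions can be illustrated with a small in-memory store. This is a sketch only: RuleStore is a hypothetical stand-in for the agent_rules table, created_at is omitted, and defaulting the category to general when none is given is an assumption.

```rust
#[derive(Debug, Clone)]
struct AgentRule {
    id: u64,
    content: String,
    category: String,
    active: bool,
}

/// Hypothetical in-memory stand-in for the agent_rules table.
#[derive(Default)]
struct RuleStore {
    rules: Vec<AgentRule>,
    next_id: u64,
}

impl RuleStore {
    /// learn: create a rule; category defaults to "general" (assumption).
    fn learn(&mut self, content: &str, category: Option<&str>) -> u64 {
        self.next_id += 1;
        self.rules.push(AgentRule {
            id: self.next_id,
            content: content.to_string(),
            category: category.unwrap_or("general").to_string(),
            active: true,
        });
        self.next_id
    }

    /// rules: list active rules, optionally filtered by category.
    fn rules(&self, category: Option<&str>) -> Vec<&AgentRule> {
        self.rules
            .iter()
            .filter(|r| r.active && category.map_or(true, |c| r.category == c))
            .collect()
    }

    /// forget: soft-delete by clearing the active flag; the row is kept.
    fn forget(&mut self, id: u64) -> bool {
        match self.rules.iter_mut().find(|r| r.id == id && r.active) {
            Some(rule) => {
                rule.active = false;
                true
            }
            None => false,
        }
    }
}
```

Because forget only flips the active flag, a forgotten rule stops appearing in listings but remains available for auditing.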

Integration

Control: Gated by self_evolution_enabled config flag (runtime toggle via Arc<AtomicBool>).

Key file: tools/agent_self_tool.rs

OpenAPI Documentation

Interactive API documentation via Scalar UI, feature-gated behind api-docs.

Stack

- utoipa -- OpenAPI 3.1 spec generation from Rust handler annotations

- scalar -- Interactive documentation UI served at /api-docs

- Feature gate: api-docs (enabled by default in daemon and desktop)

Endpoints

| Path | Description |

|---|---|

| GET /api-docs | Scalar interactive UI |

| GET /api-docs/openapi.json | Raw OpenAPI 3.1 JSON spec |

Build

The spec is assembled at runtime from #[utoipa::path] annotations on handler functions. Feature-gated handlers (channels, scheduler) are conditionally merged into the spec.

Key file: gateway/openapi.rs

Onboarding Flow

Multi-step onboarding wizard that collects AI provider setup (provider selection, API key, model), optional channel credentials (Telegram, Slack, Discord), and user profile (name, location, timezone). Available across Desktop, CLI, and TUI interfaces.

SetupStatus

The check_setup_status() function (in onboarding.rs) determines whether onboarding is needed:

- needs_setup: bool -- true if user_name, user_location, or API key is missing

- missing: Vec<String> -- list of missing fields (e.g., ["user_name", "api_key"])

- detected_timezone: Option<String> -- auto-detected IANA timezone via iana-time-zone crate

- has_usable_model: bool -- true if at least one provider has a stored API key
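
A minimal sketch of how these fields could be derived. The struct fields follow the list above; the function parameters, the "user_location" label in missing, and treating blank strings as missing are assumptions, since the real check_setup_status() reads from config and the credential store.

```rust
#[derive(Debug, PartialEq)]
struct SetupStatus {
    needs_setup: bool,
    missing: Vec<String>,
    detected_timezone: Option<String>,
    has_usable_model: bool,
}

/// Hypothetical signature: inputs are passed in directly to keep the sketch pure.
fn check_setup_status(
    user_name: Option<&str>,
    user_location: Option<&str>,
    providers_with_key: usize,
    detected_timezone: Option<String>,
) -> SetupStatus {
    // Assumption: an empty or whitespace-only value counts as missing.
    let blank = |v: Option<&str>| v.map_or(true, |s| s.trim().is_empty());

    let mut missing = Vec::new();
    if blank(user_name) {
        missing.push("user_name".to_string());
    }
    if blank(user_location) {
        missing.push("user_location".to_string());
    }
    let has_usable_model = providers_with_key > 0;
    if !has_usable_model {
        missing.push("api_key".to_string());
    }

    SetupStatus {
        needs_setup: !missing.is_empty(),
        missing,
        detected_timezone,
        has_usable_model,
    }
}
```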

Desktop (OnboardingWizard)

CLI (Interactive Flow)

The zenii onboard command runs an interactive onboarding:

- Fetch providers from GET /providers/with-key-status

- User selects provider via dialoguer::Select

- Prompt for API key via dialoguer::Password, save to POST /credentials

- Refresh providers to get updated models

- User selects model, set default via PUT /providers/default

- Optional: dialoguer::Confirm to set up a messaging channel (Telegram/Slack/Discord), save credentials to POST /credentials

- Prompt for name, location, timezone (auto-detected default)

- Save profile to PUT /config

TUI (5-Step Overlay Modal)

Centered ratatui modal (60% x 70%) with step indicator:

- ProviderSelect -- list providers, j/k navigate, Enter select

- ApiKey -- masked password input, Enter save, Esc back

- ModelSelect -- list models for selected provider, Enter select

- Channels (optional) -- Tab to switch between Telegram/Slack/Discord, j/k navigate credential fields, Enter save, s to skip

- Profile -- three text fields (Name/Location/Timezone), Tab switch, Enter save

Detection

- Timezone (server): iana-time-zone crate (Rust) -- returned in SetupStatus.detected_timezone

- Timezone (browser): Intl.DateTimeFormat().resolvedOptions().timeZone -- fallback in AuthGate

- Location: Manual user input (e.g., "Toronto, Canada")

Config Fields

- user_name: Option<String> -- display name for greetings

- user_timezone: Option<String> -- IANA format (e.g., "America/New_York")

- user_location: Option<String> -- human-readable (e.g., "New York, US")

Key files: onboarding.rs, gateway/handlers/config.rs (setup_status), web/src/lib/components/OnboardingWizard.svelte, web/src/lib/components/AuthGate.svelte, crates/zenii-cli/src/commands/onboard.rs, crates/zenii-tui/src/ui/onboard.rs

LLM-Based Auto Fact Extraction

Automatically extracts structured facts about the user from conversation exchanges and persists them via UserLearner::observe(). Fire-and-forget design -- errors are logged, never propagated to the user.

Flow

Extraction Prompt

The LLM receives the user prompt and the assistant response and is asked to extract facts in category|key|value format (one per line). Valid categories: preference, knowledge, context, workflow. If there are no meaningful facts, the LLM outputs NONE.
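
Parsing that output format might look like the sketch below. Fact, parse_facts, and should_extract are hypothetical names, not the ContextBuilder::extract_facts API. Silently skipping malformed lines and interpreting the extraction interval as a simple modulo check are both assumptions.

```rust
const VALID_CATEGORIES: [&str; 4] = ["preference", "knowledge", "context", "workflow"];

#[derive(Debug, PartialEq)]
struct Fact {
    category: String,
    key: String,
    value: String,
}

/// Parses category|key|value lines; malformed or unknown-category lines are skipped.
fn parse_facts(llm_output: &str) -> Vec<Fact> {
    if llm_output.trim() == "NONE" {
        return Vec::new();
    }
    llm_output
        .lines()
        .filter_map(|line| {
            // splitn(3) keeps any further '|' characters inside the value.
            let mut parts = line.trim().splitn(3, '|').map(str::trim);
            let (category, key, value) = (parts.next()?, parts.next()?, parts.next()?);
            if !VALID_CATEGORIES.contains(&category) || key.is_empty() || value.is_empty() {
                return None;
            }
            Some(Fact {
                category: category.to_string(),
                key: key.to_string(),
                value: value.to_string(),
            })
        })
        .collect()
}

/// Assumption: "every N messages" means the message count is a multiple of N.
fn should_extract(message_count: usize, interval: usize) -> bool {
    interval > 0 && message_count % interval == 0
}
```

Dropping bad lines instead of returning an error fits the fire-and-forget design: a partially malformed reply still yields whatever valid facts it contains.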

Config Fields

| Field | Type | Default | Purpose |

|---|---|---|---|

| context_auto_extract | bool | true | Enable/disable fact extraction |

| context_extract_interval | usize | 3 | Extract every N messages |

| context_summary_provider_id | String | "openai" | LLM provider for extraction |

| context_summary_model_id | String | "gpt-4o-mini" | LLM model for extraction |

Integration Points

- HTTP chat (gateway/handlers/chat.rs): called after reasoning_engine.chat(), before storing assistant message

- WebSocket chat (gateway/handlers/ws.rs): called after streaming completes

Key files: ai/context.rs (ContextBuilder::extract_facts), user/learner.rs (UserLearner::observe), config/schema.rs

Tool Permission System (Phase 19)

Per-surface, risk-based tool permission system. Each tool declares a RiskLevel (Low, Medium, High) via the Tool trait. Permissions are resolved hierarchically: per-surface per-tool override > risk-level default.

Risk Level Defaults

| Risk Level | Default | Examples |

|---|---|---|

| Low | Allowed | web_search, system_info |

| Medium | Allowed | config, learn, memory, skill_proposal, agent_self, channel_send, scheduler |

| High | Denied | shell, file_read, file_write, file_list, file_search, patch, process |

Surface Overrides

Local surfaces (desktop, cli, tui) override all high-risk tools to Allowed by default. Remote surfaces (telegram, slack, discord) use risk-level defaults -- high-risk tools are denied unless explicitly overridden.

Permission States

| State | Behavior |

|---|---|

| allowed | Tool can execute |

| denied | Tool is blocked |

| ask_once | Prompt user once, remember answer (Phase 2) |

| ask_always | Prompt user every time (Phase 2) |

Resolution Flow
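
The resolution order described in this section (per-surface per-tool override first, then the risk-level default) can be sketched as follows. The type names match those listed under Key Files, but the fields and the resolve signature are assumptions; the local-surface override is modeled here as a built-in default rather than stored config.

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum RiskLevel {
    Low,
    Medium,
    High,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum PermissionState {
    Allowed,
    Denied,
    AskOnce,
    AskAlways,
}

/// Hypothetical layout: explicit overrides keyed by (surface, tool).
#[derive(Default)]
struct ToolPermissions {
    overrides: HashMap<(String, String), PermissionState>,
}

const LOCAL_SURFACES: [&str; 3] = ["desktop", "cli", "tui"];

impl ToolPermissions {
    fn resolve(&self, surface: &str, tool: &str, risk: RiskLevel) -> PermissionState {
        // 1. An explicit per-surface per-tool override always wins.
        if let Some(state) = self.overrides.get(&(surface.to_string(), tool.to_string())) {
            return *state;
        }
        // 2. Otherwise fall back to the risk-level default for the surface.
        match risk {
            RiskLevel::Low | RiskLevel::Medium => PermissionState::Allowed,
            RiskLevel::High if LOCAL_SURFACES.contains(&surface) => PermissionState::Allowed,
            RiskLevel::High => PermissionState::Denied,
        }
    }
}
```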

Key Files

| File | Purpose |

|---|---|

| security/permissions.rs | ToolPermissions, PermissionResolver, PermissionState |

| tools/traits.rs | risk_level() method on Tool trait |

| config/schema.rs | tool_permissions field in AppConfig |

| gateway/handlers/permissions.rs | REST API (4 routes) |

| web/src/lib/components/settings/PermissionsSettings.svelte | Settings UI |

| web/src/lib/stores/permissions.svelte.ts | Frontend store |

Model Capability Validation

Pre-agent-dispatch check that prevents tool-calling errors with incompatible models.

Flow

Data

- Field: ModelInfo.supports_tools: bool (default true)

- Storage: ai_models.supports_tools column (DB migration v8)

- API: POST /providers/{id}/models accepts supports_tools flag

Key file: ai/agent.rs (capability check in get_or_build_agent())

Agent Delegation

The delegation system allows the main agent to decompose complex tasks into independent sub-tasks, execute them in parallel via isolated sub-agents, and aggregate the results into a unified response.

Key Components

| Component | File | Description |

|---|---|---|

| DelegationConfig | ai/delegation/mod.rs | Config: max sub-agents, token budget, timeout, decomposition model |

| DelegationTask | ai/delegation/task.rs | Task definition with id, description, tool_allowlist, depends_on |

| TaskResult | ai/delegation/task.rs | Per-task outcome: status, output, usage, duration, session_id |

| DelegationResult | ai/delegation/task.rs | Aggregated result: all task results + synthesized response + total usage |

| TaskStatus | ai/delegation/task.rs | Enum: Pending, Running, Completed, Failed, Cancelled, TimedOut |

| SubAgent | ai/delegation/sub_agent.rs | Isolated agent with own session, filtered tools, timeout enforcement |

| Coordinator | ai/delegation/coordinator.rs | Orchestrator: decompose, validate, execute waves, cancel, aggregate |

Execution Model

- Dependency waves: Tasks are partitioned into waves based on depends_on fields. Each wave runs in parallel via JoinSet. Wave N+1 starts only after wave N completes.

- Isolated sessions: Each sub-agent gets a dedicated session with source: "delegation" for traceability.

- Tool filtering: Sub-agents can be restricted to a tool allowlist, or inherit the surface's full permission set.

- Timeout: Per-agent timeout via tokio::time::timeout, configurable via delegation_per_agent_timeout_secs.

- Cancellation: Coordinator::cancel(id) aborts all sub-agent JoinHandles for a delegation run. cancel_all() aborts everything.
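
The wave partitioning described above can be sketched as a repeated "ready set" pass over the tasks. This is an illustration, not the Coordinator code: partition_into_waves is a hypothetical name, the task struct is reduced to id and depends_on, and rejecting unknown dependencies and cycles with an error is an assumption about validation.

```rust
use std::collections::HashSet;

/// Reduced task shape; the real DelegationTask also carries a description and tool_allowlist.
struct DelegationTask {
    id: String,
    depends_on: Vec<String>,
}

/// Groups task ids into waves: every task in wave N depends only on earlier waves.
fn partition_into_waves(tasks: &[DelegationTask]) -> Result<Vec<Vec<String>>, String> {
    let ids: HashSet<&str> = tasks.iter().map(|t| t.id.as_str()).collect();
    for t in tasks {
        if let Some(dep) = t.depends_on.iter().find(|d| !ids.contains(d.as_str())) {
            return Err(format!("task {} depends on unknown task {}", t.id, dep));
        }
    }

    let mut done: HashSet<&str> = HashSet::new();
    let mut waves: Vec<Vec<String>> = Vec::new();
    while done.len() < tasks.len() {
        // A task is ready when it has not run and all of its dependencies have.
        let wave: Vec<&str> = tasks
            .iter()
            .filter(|t| !done.contains(t.id.as_str()))
            .filter(|t| t.depends_on.iter().all(|d| done.contains(d.as_str())))
            .map(|t| t.id.as_str())
            .collect();
        if wave.is_empty() {
            return Err("dependency cycle detected".to_string());
        }
        done.extend(wave.iter().copied());
        waves.push(wave.into_iter().map(String::from).collect());
    }
    Ok(waves)
}
```

Each inner vector corresponds to one batch that could be handed to a JoinSet; the next batch is only computed once the previous one is marked done.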

Config Fields

| Field | Type | Default | Description |

|---|---|---|---|

| delegation_max_sub_agents | usize | 4 | Maximum sub-tasks per delegation |

| delegation_per_agent_token_budget | usize | 4000 | Token budget per sub-agent |

| delegation_per_agent_timeout_secs | u64 | 120 | Timeout per sub-agent in seconds |

| delegation_decomposition_model | Option | None | Model override for decomposition LLM call |

Gateway Integration

The ChatRequest struct has an optional delegation: Option<bool> field. When true, the chat handler delegates to Coordinator::delegate() instead of the standard agent flow. Two management routes are always available:

- GET /agents/active -- list active delegation run IDs

- POST /agents/{id}/cancel -- cancel a delegation run by ID

Delegation System Flow

End-to-end sequence from client WebSocket request through decomposition, parallel execution, and aggregated response. Everything runs on the daemon -- clients are thin renderers of streamed events.

Key points:

- LLM does the decomposition -- the Coordinator sends a meta-prompt to the configured model asking it to break the task into sub-tasks. The LLM decides how many agents, what each does, and what tools each needs.

- WebSocket protocol defines 4 message types: delegation_started, agent_progress, agent_completed, delegation_completed -- enabling real-time visualization in all clients.

- Oneshot channel delivers the final DelegationResult back to the WS handler for the aggregated text response.

Workflow Engine

The workflow engine provides multi-step automation pipelines defined in TOML, with DAG-based execution ordering, template resolution between steps, retry/timeout policies, and DB-persisted run history. Feature-gated behind workflows.

Key Components

| Component | File | Description |

|---|---|---|

| Workflow | workflows/definition.rs | Workflow definition: id, name, steps, optional schedule |

| WorkflowStep | workflows/definition.rs | Step with name, type, depends_on, retry config, failure policy, timeout |

| StepType | workflows/definition.rs | Enum: Tool, Llm, Condition, Parallel, Delay |

| FailurePolicy | workflows/definition.rs | Enum: Stop, Continue, Fallback with step reference |

| RetryConfig | workflows/definition.rs | max_retries + retry_delay_ms |

| StepOutput | workflows/definition.rs | Per-step result: output string, success, duration, error |

| WorkflowRun | workflows/definition.rs | Run record: status, step results, timestamps |

| WorkflowRunStatus | workflows/definition.rs | Enum: Running, Completed, Failed, Cancelled |

| WorkflowRegistry | workflows/mod.rs | DashMap-backed CRUD + TOML persistence to disk |

| WorkflowExecutor | workflows/executor.rs | DAG builder, topological execution, DB persistence, retry/timeout |

| dispatch_step | workflows/runtime.rs | Step type dispatcher with template resolution |

| resolve | workflows/templates.rs | Minijinja template engine for inter-step data flow |

Step Types

| Type | Description | Template Support |

|---|---|---|

| Tool | Execute a registered tool with JSON args | Args are template-resolved |

| Llm | Send prompt to LLM | Prompt is template-resolved |

| Condition | Evaluate expression, branch to if_true/if_false | Expression is template-resolved |

| Parallel | Meta-step referencing parallel sub-steps | N/A |

| Delay | Sleep for N seconds | N/A |

Template Resolution

Inter-step data flow uses minijinja templates. Completed step outputs are available via {{ steps.step_name.output }}, {{ steps.step_name.success }}, and {{ steps.step_name.error }}.

Example workflow TOML:

id = "daily-report"

name = "Daily Report"

description = "Fetch news and summarize"

[[steps]]

name = "fetch"

type = "tool"

tool = "web_search"

[steps.args]

query = "latest tech news"

[[steps]]

name = "summarize"

type = "llm"

prompt = "Summarize: {{ steps.fetch.output }}"

depends_on = ["fetch"]
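
To show what resolving the summarize prompt involves, here is a deliberately simplified stand-in written with the standard library only. It is not minijinja and not the code in workflows/templates.rs: it handles just the three {{ steps.NAME.FIELD }} forms mentioned above, and the StepOutput shape and resolve signature are assumptions.

```rust
use std::collections::HashMap;

/// Reduced step result; the real StepOutput also records duration.
struct StepOutput {
    output: String,
    success: bool,
    error: Option<String>,
}

/// Replaces {{ steps.NAME.output|success|error }} placeholders with step results.
fn resolve(template: &str, steps: &HashMap<String, StepOutput>) -> Result<String, String> {
    let mut out = String::new();
    let mut rest = template;
    while let Some(start) = rest.find("{{") {
        let end = rest[start..].find("}}").ok_or("unclosed placeholder")? + start;
        out.push_str(&rest[..start]);
        let expr = rest[start + 2..end].trim();
        let parts: Vec<&str> = expr.split('.').collect();
        let value = match parts.as_slice() {
            ["steps", name, field] => {
                let step = steps
                    .get(*name)
                    .ok_or_else(|| format!("unknown step {name}"))?;
                match *field {
                    "output" => step.output.clone(),
                    "success" => step.success.to_string(),
                    "error" => step.error.clone().unwrap_or_default(),
                    other => return Err(format!("unknown field {other}")),
                }
            }
            _ => return Err(format!("unsupported expression {expr}")),
        };
        out.push_str(&value);
        rest = &rest[end + 2..];
    }
    out.push_str(rest);
    Ok(out)
}
```

Failing on an unknown step name, rather than substituting an empty string, surfaces a misspelled depends_on target before the prompt reaches the LLM. Whether the real engine is configured this strictly is not stated here.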

Failure Policies

| Policy | Behavior |

|---|---|

| Stop (default) | Workflow fails immediately on step failure |

| Continue | Skip failed step, continue to next |

| Fallback { step } | Execute named fallback step on failure |

Config Fields

| Field | Type | Default | Description |

|---|---|---|---|

| workflow_dir | Option | None | Workflow TOML directory (default: data_dir/workflows) |

| workflow_max_concurrent | usize | 5 | Max concurrent workflow runs |

| workflow_max_steps | usize | 50 | Max steps per workflow |

| workflow_step_timeout_secs | u64 | 300 | Default step timeout in seconds |

| workflow_step_max_retries | u32 | 3 | Default step retry count |

DB Schema

- workflow_runs -- run history: id, workflow_id, workflow_name, status, started_at, completed_at, error

- workflow_step_results -- per-step results: id, run_id, step_name, output, success, duration_ms, error, executed_at

MCP Integration

Zenii supports the Model Context Protocol as both a server (exposing tools to external AI agents) and a client (consuming tools from external MCP servers).

MCP Server Architecture

Key components:

- ZeniiMcpServer -- implements rmcp::ServerHandler manually (tools are dynamic from ToolRegistry, not static)

- convert module -- bidirectional conversion between Zenii ToolInfo/ToolResult and rmcp Tool/CallToolResult

- Security enforcement -- every call_tool goes through SecurityPolicy::validate_tool_execution()

- Tool filtering -- configurable mcp_server_exposed_tools (allowlist) and mcp_server_hidden_tools (denylist)

- Tool prefix -- all tools exposed with zenii_ prefix (configurable via mcp_server_tool_prefix)
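
The filtering and prefixing rules can be combined in a short sketch. The three config field names come from the list above; the McpServerConfig struct, the exposed_tool_names function, and the semantics (an empty allowlist exposes everything, the denylist always wins) are assumptions for illustration.

```rust
/// Hypothetical grouping of the three config fields named above.
struct McpServerConfig {
    mcp_server_exposed_tools: Vec<String>,
    mcp_server_hidden_tools: Vec<String>,
    mcp_server_tool_prefix: String,
}

/// Returns the prefixed names of the registry tools that should be exposed over MCP.
fn exposed_tool_names(cfg: &McpServerConfig, registry: &[&str]) -> Vec<String> {
    registry
        .iter()
        // Assumption: an empty allowlist means "expose every tool".
        .filter(|name| {
            cfg.mcp_server_exposed_tools.is_empty()
                || cfg.mcp_server_exposed_tools.iter().any(|t| t.as_str() == **name)
        })
        // Assumption: the denylist takes precedence over the allowlist.
        .filter(|name| !cfg.mcp_server_hidden_tools.iter().any(|t| t.as_str() == **name))
        .map(|name| format!("{}{}", cfg.mcp_server_tool_prefix, name))
        .collect()
}
```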

Files:

- crates/zenii-core/src/mcp/server.rs -- ZeniiMcpServer handler

- crates/zenii-core/src/mcp/convert.rs -- type conversions

- crates/zenii-mcp-server/src/main.rs -- thin binary (~75 lines)

MCP Client Architecture

Key components:

- McpClientManager -- spawns external MCP servers as child processes and connects via stdio (HTTP transport is planned but not yet implemented)

- Persistent sessions -- each server's Peer<RoleClient> is stored and reused; sessions are kept alive in background tasks (no per-call respawn)

- Timeouts: 15 s connect, 10 s tool discovery, 60 s per tool call

- McpClientTool -- wraps a remote MCP tool as a Zenii Tool (RiskLevel::Medium), forwarding call_tool via the live session and returning ToolResult

- Tool prefixing -- optional tools_prefix per server (e.g., "github/" → "github/list_repos")

- Resilient startup -- servers that fail to connect are skipped with a warning; the agent still starts

- Configuration via mcp_client_servers array in config.toml

Config schema:

[[mcp_client_servers]]

id = "github"

tools_prefix = "github/"

enabled = true

[mcp_client_servers.transport]

type = "stdio"

command = "npx"

args = ["-y", "@modelcontextprotocol/server-github"]

Note: HTTP/SSE transport is defined in the schema but not yet implemented. Attempts to use it return an error.

Session lifecycle:

boot → McpClientManager::connect_all()

├─ spawn child process (stdio)

├─ rmcp handshake (15s timeout)

├─ list_all_tools() (10s timeout)

├─ store Peer<RoleClient> in HashMap

└─ background task keeps session alive indefinitely

call → peer.call_tool() via stored Peer (60s timeout)

shutdown → tokio aborts background tasks → child process exits

Files:

- crates/zenii-core/src/mcp/client.rs -- McpClientManager

- crates/zenii-core/src/tools/mcp_client_tool.rs -- McpClientTool

Settings UI:

- web/src/lib/components/settings/McpSettings.svelte -- MCP tab in Settings with server sub-tab (connection snippets + tool visibility) and clients sub-tab (add/edit/delete/toggle servers with 5 quick-add presets)

A2A Agent Card

The GET /.well-known/agent.json endpoint serves an A2A Agent Card for agent-to-agent discovery. This is a public endpoint (no auth required), served before the auth middleware layer alongside /health.

File: crates/zenii-core/src/gateway/handlers/agent_card.rs

Feature Gates

| Feature | What It Enables | New Deps |

|---|---|---|

| mcp-server | ZeniiMcpServer, convert module | rmcp, schemars |

| mcp-client | McpClientManager, rig-core rmcp integration | rmcp (+ rig-core/rmcp) |

Neither feature is in the default set — zero size impact on existing binaries.

Concurrency Rules

These rules are enforced across the entire codebase to prevent async runtime issues.

| Rule | Rationale |

|---|---|

| No std::sync::Mutex in async paths | Blocks the tokio runtime; use tokio::sync::Mutex or DashMap |

| No block_on() anywhere | Panics inside tokio runtime; use tokio::spawn or .await |

| All SQLite ops via spawn_blocking | rusqlite is synchronous; blocking in async context starves tasks |

| All errors are ZeniiError | No Result<T, String>; use thiserror enum with typed variants |

| AppState is Clone + Arc<T> | Shared across axum handlers without lifetime issues |

| EventBus uses tokio::sync::broadcast | Lock-free fan-out to all subscribers |

| Never hold async locks across .await | Prevents deadlocks; acquire, use, drop before yielding |

Lessons Learned from v1

Key architectural mistakes from Zenii v1 and how v2 prevents them.

| v1 Mistake | v2 Prevention |

|---|---|

| std::sync::Mutex in async code | tokio::sync::Mutex or DashMap exclusively |

| block_on() in event loop | Zero block_on() calls; tokio::spawn for sync work |

| Result<T, String> everywhere | ZeniiError enum with thiserror |

| Custom AI layer (1400 LOC) | rig-core (battle-tested, 18 providers) |

| 21 Zustand stores | 6 Svelte 5 rune stores ($state), single WS connection |

| 165 IPC commands (Tauri v1) | Gateway-only architecture (~40 HTTP routes) |

| OKLCH color functions in CSS | Pre-computed hex values only |

| useEffect soup (React) | Single $effect per Svelte component, reactive stores |

| 13-phase boot sequence | Single init_services() in boot.rs |